Most enterprises have disaster recovery plans for their mission-critical systems. These plans sit in SharePoint or on network drives, updated annually to satisfy audit requirements. They document backup procedures, recovery time objectives, and failover processes in careful detail. The problem is that most of these plans have never been fully tested under realistic conditions, and many would fail if actually executed during a real disaster.

The gap between documented disaster recovery plans and operational reality is one of the most significant risks in enterprise IT. Organizations assume they can recover from major incidents because they have plans and backups. Then a datacenter loses power, a ransomware attack encrypts production systems, or a failed deployment corrupts critical databases, and the recovery takes three times longer than planned or fails entirely.

This gap exists because disaster recovery planning is typically treated as a compliance exercise rather than an operational capability. The focus is on having documented plans that satisfy auditors, not on building systems that can actually be recovered quickly and reliably under the stress and time pressure of real incidents.

Why Recovery Time Objectives Are Usually Wrong

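Most disaster recovery plans specify recovery time objectives, often expressed as targets like “restore operations within four hours of an incident.” They are sometimes conflated with availability targets such as “achieve 99.9% availability annually,” which is a different measure: 99.9% allows roughly 8.8 hours of cumulative downtime per year and says nothing about how long any single recovery may take. RTOs typically come from business requirements gathered during planning sessions where stakeholders estimate how long they could operate without specific systems.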

The problem is that these RTOs rarely reflect the actual technical time required to recover systems. They represent business tolerance for downtime, not technical capability. An organization might need to recover within four hours to avoid a significant business impact, but its actual recovery procedures might require eight or twelve hours to execute properly.

This mismatch creates a planning fiction where documented RTOs satisfy business and audit requirements but cannot be achieved operationally. When real incidents occur, organizations discover that their RTOs were aspirational rather than realistic. The business still experiences extended downtime, and IT teams face blame for failing to meet commitments that were never technically feasible.

The underlying issue is that recovery time depends on factors often not considered during planning. How long does it take to recognize that recovery is needed, rather than continuing to troubleshoot the primary system? How long to make the decision to activate disaster recovery versus continuing repair attempts? How long to coordinate across multiple teams during the chaos of a major incident? How long to validate that recovered systems are functioning correctly before declaring them operational?

These human and organizational factors often consume more time than the technical recovery procedures themselves. Yet they rarely appear in disaster recovery plans, which focus primarily on technical steps and assume smooth coordination and immediate decision-making that does not reflect reality during actual incidents.
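To make this concrete, here is a minimal sketch of an end-to-end recovery estimate that counts the human phases alongside the technical restore. The phase names follow the questions above; every duration is an illustrative assumption, not a measurement from a real incident, and the `RecoveryPhase` type is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecoveryPhase:
    name: str
    minutes: int
    technical: bool  # True only for hands-on restore work


# Hypothetical figures for illustration; replace with timings measured in drills.
PHASES = [
    RecoveryPhase("detect that recovery, not repair, is needed", 45, False),
    RecoveryPhase("decide to activate disaster recovery", 30, False),
    RecoveryPhase("coordinate teams and assign roles", 40, False),
    RecoveryPhase("execute technical recovery procedures", 120, True),
    RecoveryPhase("validate recovered systems", 35, False),
]


def total_minutes(phases):
    """End-to-end recovery time across all phases."""
    return sum(p.minutes for p in phases)


def technical_minutes(phases):
    """Time spent on the technical restore alone."""
    return sum(p.minutes for p in phases if p.technical)


def meets_rto(phases, rto_minutes):
    """Compare the end-to-end estimate, not just the restore, with the RTO."""
    return total_minutes(phases) <= rto_minutes


if __name__ == "__main__":
    rto = 4 * 60  # the four-hour target used as an example in the text
    print("technical only:", technical_minutes(PHASES), "min")
    print("end to end:", total_minutes(PHASES), "min")
    print("meets RTO:", meets_rto(PHASES, rto))
```

With these assumed numbers the technical restore fits comfortably inside a four-hour RTO, yet the end-to-end recovery does not. A plan that times only the technical steps hides exactly this gap.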

The Dependency Problem That Plans Ignore

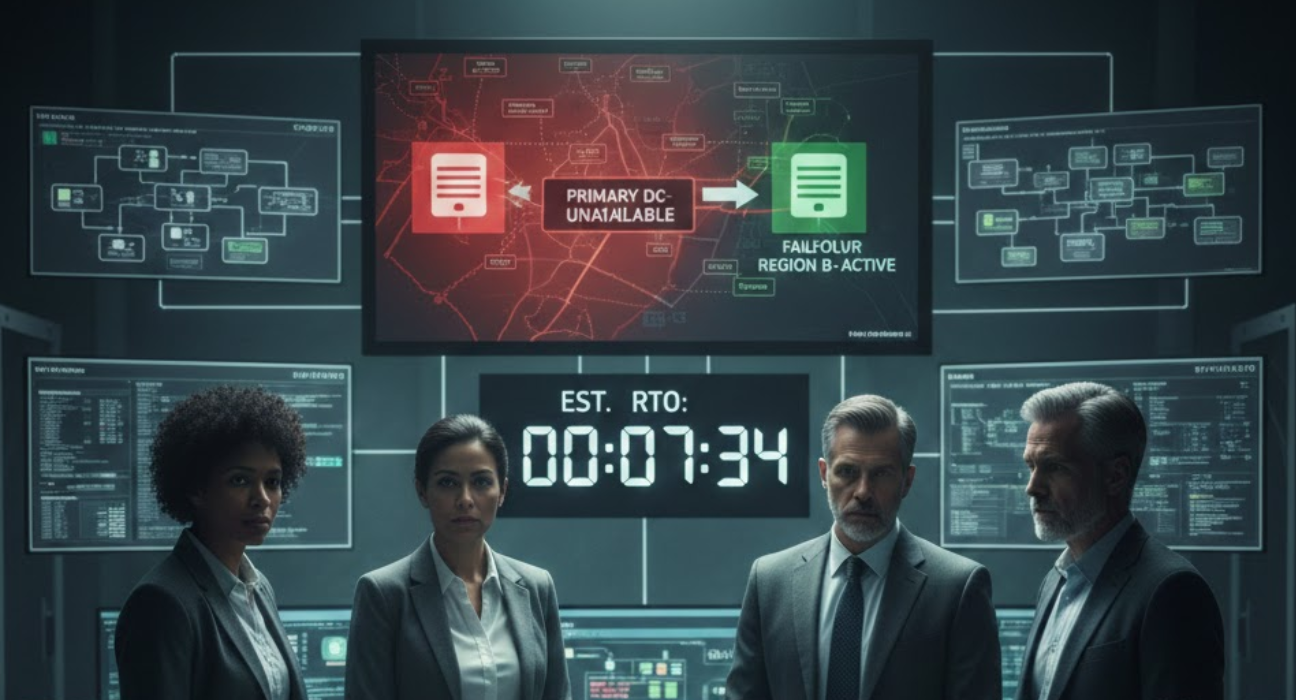

Mission-critical systems rarely exist in isolation. They depend on databases, authentication services, network infrastructure, DNS, load balancers, monitoring systems, and dozens of other components. A comprehensive disaster recovery plan must address all these dependencies and ensure they can be recovered in the correct sequence.

Most plans document the recovery procedures for the primary application but treat dependencies as assumed capabilities. The plan says “restore the database,” but does not specify how to recover the database infrastructure itself if it is also affected by the disaster. It says “configure load balancers” but assumes the load balancer management systems are operational.

When disasters affect entire datacenters or regions, these assumptions break down. Everything must be recovered, often simultaneously, by teams who are overwhelmed and working under intense pressure. Dependencies create sequencing requirements where System A cannot start until System B is operational, but System B depends on System C, which requires System D. Untangling these dependencies during an incident wastes critical time.
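One way to avoid untangling the sequence mid-incident is to record dependencies as data and compute the recovery order ahead of time. The sketch below uses Python's standard `graphlib` module (Python 3.9 or later); the system names and the dependency map are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical dependency map: each key lists the systems it needs running first.
DEPENDS_ON = {
    "order-api": {"database", "auth", "load-balancer"},
    "auth": {"database", "dns"},
    "database": {"storage", "dns"},
    "load-balancer": {"dns"},
    "storage": set(),
    "dns": set(),
}


def recovery_waves(depends_on):
    """Group systems into waves; systems within a wave can be recovered in parallel."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises CycleError if dependencies are circular
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


def find_cycle(depends_on):
    """Return the circular dependency chain if one exists, else None."""
    try:
        TopologicalSorter(depends_on).prepare()
    except CycleError as err:
        return err.args[1]
    return None


if __name__ == "__main__":
    for number, wave in enumerate(recovery_waves(DEPENDS_ON), start=1):
        print(f"wave {number}: {', '.join(wave)}")
```

Running `find_cycle` as part of a build or review step is a cheap way to catch circular dependencies, which would otherwise surface only when nothing can be started first.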

The complexity multiplies when systems span multiple datacenters or cloud regions. Geographic redundancy provides resilience, but it requires careful design to ensure that failover actually works. Data must be replicated correctly, configurations must be synchronized, and failover procedures must account for split-brain scenarios where both locations believe they are primary.

Many enterprises discover these dependency issues only during actual incidents or rare comprehensive tests. Their disaster recovery plans document how to recover individual systems but not how to orchestrate recovery of the entire interdependent ecosystem under conditions where normal coordination mechanisms may not be available.

Why Testing Is Harder Than Planning

Disaster recovery testing typically involves restoring backups to non-production environments and validating that applications start correctly. This confirms that backups exist and basic recovery procedures work, but it does not validate that the organization can actually execute a full recovery during a real disaster.

Comprehensive testing requires taking production systems offline or simulating failures that affect real business operations. Most organizations cannot accept this disruption, so they test in ways that do not reflect actual disaster conditions. They restore individual systems rather than entire environments. They test during planned maintenance windows with full staffing rather than at 2 AM, when real incidents can just as easily strike. They execute tests with detailed preparation rather than under the surprise and time pressure of real disasters.

The result is testing that provides false confidence. The organization believes its disaster recovery capability is validated because tests succeeded, but those tests did not exercise the most difficult aspects of actual recovery. When real disasters occur, problems emerge that testing never revealed because the testing was not realistic enough.

Even organizations that invest in comprehensive testing face challenges with test frequency. A thorough disaster recovery test might take days to execute, require extensive coordination across teams, and risk disrupting business operations if something goes wrong. Doing this quarterly or even annually is difficult. Yet systems change constantly, and recovery procedures that worked six months ago may not work today because underlying infrastructure or dependencies have evolved.

Automated testing helps, but cannot address all scenarios. Infrastructure-as-code and automated deployment pipelines enable rapid environment recreation, which reduces technical recovery time. But they do not test human decision-making, coordination between teams, or escalation procedures. The technical aspects of disaster recovery are often easier than the organizational and process aspects.

The Cost-Benefit Reality

True disaster recovery capability is expensive. It requires maintaining redundant infrastructure that sits idle most of the time. It requires regular testing that consumes operational capacity. It requires designing applications for quick recovery, which adds complexity and development time. It requires training teams and maintaining runbooks that may not be used for years.

Organizations must make deliberate choices about which systems justify this investment. Not every application needs four-hour recovery capability. Internal tools used by small teams during business hours may tolerate day-long recovery times. Customer-facing systems processing revenue transactions cannot.

The difficulty is making these trade-offs explicitly. Many organizations commit to ambitious RTOs for all mission-critical systems without honestly assessing whether the business value justifies the cost. They document aggressive recovery targets that make stakeholders comfortable, but never fund the infrastructure and capabilities needed to achieve them.

A more pragmatic approach involves tiered recovery objectives where truly critical systems receive significant investment in resilience and rapid recovery capability, while less critical systems accept longer recovery times with proportionally lower investment. This requires difficult conversations about what is genuinely mission-critical versus what is merely important.
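A tiered model is easier to keep honest when the tiers and the measured recovery times live in the same place. The following sketch assumes three tiers; the four-hour and day-long targets echo the examples above, while the 72-hour tier, the system names, and the measured times are made up for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    CRITICAL = "critical"
    IMPORTANT = "important"
    STANDARD = "standard"


# Assumed targets in hours; each organization would set its own.
RTO_HOURS = {Tier.CRITICAL: 4, Tier.IMPORTANT: 24, Tier.STANDARD: 72}


@dataclass(frozen=True)
class System:
    name: str
    tier: Tier
    measured_recovery_hours: float  # from the most recent recovery test


def unmet_commitments(systems):
    """Return names of systems whose measured recovery exceeds their tier's RTO."""
    return [
        s.name for s in systems
        if s.measured_recovery_hours > RTO_HOURS[s.tier]
    ]


# Hypothetical inventory for illustration.
INVENTORY = [
    System("payments", Tier.CRITICAL, 9.5),
    System("customer-portal", Tier.CRITICAL, 3.0),
    System("reporting", Tier.IMPORTANT, 16.0),
    System("internal-wiki", Tier.STANDARD, 30.0),
]

if __name__ == "__main__":
    print("RTO not currently achievable for:", unmet_commitments(INVENTORY))
```

Any system the check flags forces the explicit conversation: either fund the capability needed to hit the tier's target or move the system to a tier it can actually meet.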

Financial services and healthcare organizations often have regulatory requirements that mandate specific recovery capabilities regardless of cost. For these organizations, the question is not whether to invest but how to implement required capabilities most efficiently. Even with regulatory mandates, organizations must still make design trade-offs between different technical approaches to achieving required recovery objectives.

How Ozrit Designs Systems for Operational Resilience

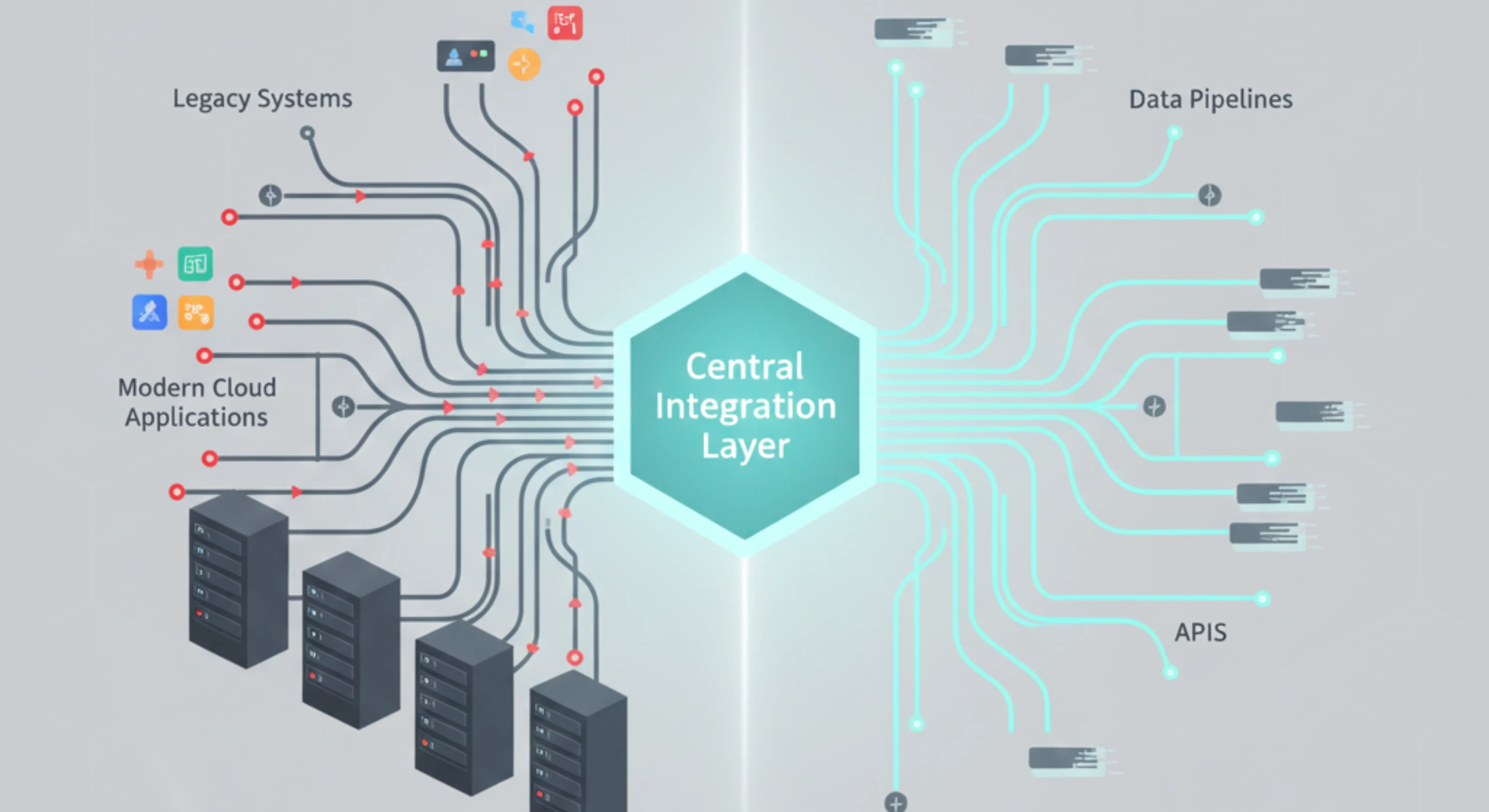

At Ozrit, we approach disaster recovery as an operational capability that must be designed into systems from the beginning, not as documentation produced to satisfy compliance requirements. Our designs focus on reducing recovery time through architecture rather than accepting long recovery periods and trying to compensate through planning.

We structure our teams with infrastructure architects who have operated mission-critical systems through actual disasters. These architects understand the difference between theoretical recovery procedures and what actually works under pressure during real incidents. They design systems based on this operational experience rather than following standard patterns that look good on architecture diagrams but fail in practice.

Our first 30 days on any mission-critical system engagement include comprehensive dependency mapping and single-point-of-failure analysis. We document every system dependency, identify components whose failure would prevent recovery, and design redundancy or rapid recovery procedures for these critical paths. This analysis informs architectural decisions about where to invest in resilience versus accepting risk.

We design recovery procedures to be executable by available staff under realistic incident conditions. This means extensive automation that eliminates manual steps where human error is likely under stress. It means clear decision trees that guide responders through recovery sequencing. It means monitoring that provides unambiguous status information about what is working and what needs recovery attention.

Our approach to recovery time objectives starts with a technical assessment of actual recovery capabilities before making commitments. We measure how long recovery procedures take to execute, including all dependencies and coordination requirements. We identify bottlenecks that extend recovery time and design improvements to address them. We commit to RTOs only after validating that they are technically achievable with the planned architecture and procedures.

We implement disaster recovery with realistic testing as a mandatory component. Our standard approach includes quarterly recovery tests that exercise actual failover procedures, not just backup restoration. These tests run during off-hours to simulate incident conditions more accurately. We document test results transparently, including issues discovered and time required, which provides an honest assessment of recovery capability.

For geographically distributed systems, we design active-active architectures where possible rather than relying on failover. This eliminates recovery time for many failure scenarios because both locations process traffic continuously. When failover architectures are necessary for cost or technical reasons, we implement automated health checking and failover triggers that activate recovery without requiring human decision-making during incidents.
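As an illustration of an automated failover trigger, the sketch below fires only after several consecutive failed health probes, so that a single dropped probe does not cause a failover. It is a generic pattern under assumed parameters, not a description of any specific Ozrit implementation; the class name and the threshold of three are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FailoverTrigger:
    failure_threshold: int = 3  # assumed value; tune against real probe noise
    consecutive_failures: int = 0
    failed_over: bool = False

    def record(self, healthy: bool) -> bool:
        """Record one probe result; return True if this probe triggers failover."""
        if self.failed_over:
            return False  # already failed over; failing back is a separate decision
        if healthy:
            self.consecutive_failures = 0
            return False
        self.consecutive_failures += 1
        if self.consecutive_failures >= self.failure_threshold:
            self.failed_over = True
            return True
        return False


if __name__ == "__main__":
    trigger = FailoverTrigger()
    probes = [False, False, True, False, False, False]
    for result in probes:
        if trigger.record(result):
            print("failover triggered")
```

The threshold trades detection speed against false positives: a lower value fails over sooner but is more likely to react to transient network blips.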

We build comprehensive monitoring and alerting that distinguishes scenarios requiring recovery from scenarios requiring repair. Clear signals about incident severity and the appropriate response prevent wasted time troubleshooting systems that need recovery rather than repair. This monitoring integrates with incident management systems to ensure appropriate escalation and coordination.

Our disaster recovery implementations include automated runbooks that execute recovery procedures programmatically rather than relying on manual execution of documented steps. These runbooks are maintained as code alongside application code and updated whenever systems change. This ensures recovery procedures remain current rather than becoming outdated as systems evolve.
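A runbook maintained as code can be as simple as an ordered list of named steps that stops at the first failure and records what happened. The sketch below is one hypothetical shape for such a runner; the `Step` type and the placeholder actions are assumptions, and real steps would call the organization's own tooling.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Step:
    name: str
    action: Callable[[], bool]  # returns True on success


def run_runbook(steps: List[Step]) -> Tuple[bool, List[str]]:
    """Run steps in order, stop at the first failure, and return (success, log)."""
    log: List[str] = []
    for step in steps:
        try:
            ok = step.action()
        except Exception as exc:  # a crashing step counts as a failed step
            log.append(f"FAILED {step.name}: {exc}")
            return False, log
        if not ok:
            log.append(f"FAILED {step.name}")
            return False, log
        log.append(f"OK {step.name}")
    return True, log


if __name__ == "__main__":
    # Placeholder actions for illustration only.
    runbook = [
        Step("promote replica database", lambda: True),
        Step("repoint DNS to recovery site", lambda: True),
        Step("run smoke tests", lambda: True),
    ]
    success, log = run_runbook(runbook)
    print("\n".join(log))
    print("recovered" if success else "recovery halted")
```

Because the runbook is ordinary code, it can be reviewed, versioned, and exercised in automated tests alongside the application it recovers.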

We structure programs with realistic timelines for implementing production-grade disaster recovery capability. Building truly resilient systems typically requires four to six months beyond basic application development. This includes designing redundancy, implementing failover automation, creating comprehensive monitoring, developing recovery procedures, and conducting thorough testing. We include this timeline in project planning as a core requirement rather than treating disaster recovery as optional or deferrable.

Our teams typically range from 30 to 80 people for mission-critical system implementations, and these teams include dedicated site reliability engineering expertise focused specifically on resilience and recovery capability. This is not a separate workstream but an integrated capability that influences architectural decisions throughout development.

We provide 24/7 support for mission-critical systems, including incident response capability during disasters. When recovery is needed, our teams execute recovery procedures, coordinate with the organization’s incident management, and maintain detailed logs of actions taken during recovery. Our support includes not just monitoring and alerting but active participation in disaster response.

For organizations with existing mission-critical systems that lack adequate disaster recovery capability, we conduct structured assessments that evaluate current resilience, identify specific gaps, and create prioritized remediation plans. We distinguish between quick wins that reduce risk with limited effort and structural improvements that require significant investment but deliver substantially better recovery capability.

Beyond Compliance to Operational Reality

The organizations that handle disasters most effectively are those that treat disaster recovery as an operational discipline rather than a planning exercise. They invest in systems designed for recoverability rather than trying to compensate for fragile systems through detailed plans. They test recovery procedures regularly under realistic conditions and adjust designs based on what testing reveals. They make honest assessments of recovery capability rather than documenting aspirational targets that cannot be achieved.

Disaster recovery planning satisfies auditors and provides psychological comfort to leadership. But when actual disasters occur, operational capability determines outcomes. Systems either recover quickly because they were designed for it, or they do not because plans and documentation cannot substitute for architecture and automation. The gap between planning and operational reality is where business impact occurs, and closing that gap requires approaching disaster recovery as an engineering discipline from the beginning of system design rather than as documentation produced after systems are built.