AI governance sounds like the kind of topic that generates committee meetings and policy documents without much practical impact. But the lack of effective AI governance is causing real problems in large enterprises right now. Multiple teams deploy AI capabilities independently with different standards, inconsistent risk management, and no coordinated oversight. Some implementations create compliance exposure. Others waste resources on redundant efforts. Many fail to deliver value because they were never properly designed for enterprise operations.

The board asks reasonable questions. What AI are we using and where? Who approved these deployments? How do we know they are not creating bias, compliance violations, or operational risk? What happens when something goes wrong? In most organisations, leadership cannot get clear answers because no one has a complete picture of AI deployment across the enterprise.

This creates both immediate risk and strategic problems. The immediate risk is that AI systems already in production might be causing harm the organisation does not know about yet. The strategic problem is that without governance, the enterprise cannot scale AI adoption effectively or capture the full value it should deliver.

Why AI Governance Is Different

Most enterprises have governance for technology projects. There are approval processes for new systems, standards for software development, and oversight for major investments. These frameworks help, but do not adequately address AI-specific challenges.

AI systems behave differently from traditional software. A traditional system does what it was programmed to do, consistently and predictably. An AI system learns patterns from data and applies those patterns in ways that can change over time. The same AI model might perform well when deployed and degrade months later as data patterns shift. Testing AI requires different approaches than testing deterministic software.
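One common way to detect that data patterns have shifted is to compare the distribution of a model input at training time with what the model sees in production. The sketch below uses the population stability index (PSI) for that comparison. The function name, the bin count, and the floor value are illustrative assumptions, not part of any particular platform, and the thresholds people apply to PSI are rules of thumb rather than fixed standards.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """Compare two samples of one numeric feature; larger values mean more shift."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if every training value is identical.
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the training range are clipped into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids taking the logarithm of zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A score near zero suggests production data still resembles the training data. A large score is a prompt to investigate, not proof that the model has degraded.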

AI creates unique risks around bias and fairness. A traditional system applies business rules explicitly defined by developers. If the rules are biased, the bias can at least be found by reading the code. AI learns patterns from historical data that might contain societal biases, hiring discrimination, or other problematic patterns. The bias is implicit in the model and might not become visible until the AI has made many decisions affecting people.

AI raises questions about accountability that traditional systems do not. When traditional software causes a problem, accountability is usually clear. The bug existed in code someone wrote, or the requirements were wrong, or testing missed something. When AI makes a harmful decision, accountability is less obvious. Is it the data scientist who trained the model? The business owner who deployed it? The vendor who provided the platform? These questions have real consequences when regulators investigate or lawsuits are filed.

The expertise required to evaluate AI is scarce. Most IT teams can assess whether software is well-designed and properly tested. Far fewer can evaluate whether an AI model is appropriate for its intended use, whether training data introduces bias, or whether the approach will remain reliable as conditions change. This knowledge gap makes governance difficult to implement effectively.

What Enterprise AI Governance Must Address

Effective AI governance answers several critical questions across all AI deployments in the organisation.

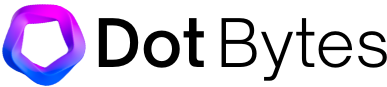

The first set of questions is about inventory and oversight. What AI systems are currently deployed or in development? Who owns each one? What decisions does each AI system make or influence? What data does it use? These questions sound basic, but many enterprises cannot answer them comprehensively. AI capabilities get embedded in various systems by different teams without central visibility.

The second set involves risk assessment and approval. How do we evaluate whether a proposed AI application is appropriate? What risks does it create for the organisation? Who has the authority to approve high-risk AI deployments? What testing and validation are required before deployment? Without clear processes, teams make these judgments inconsistently or not at all.

The third set addresses ongoing operations. How do we monitor AI systems to detect when performance degrades? How do we identify if AI is producing biased outcomes? What triggers the review or suspension of a deployed AI system? Who is accountable for these systems once they are in production? AI requires active management after deployment, not a one-off release.

The fourth set concerns compliance and ethics. How do we ensure AI deployments comply with relevant regulations? How do we prevent AI from making decisions that violate company values or ethical standards? How do we maintain appropriate human oversight? How do we explain AI decisions when required? These questions have different answers in different industries and contexts, but must be addressed systematically.

The final set involves learning and improvement. When AI systems fail or create problems, how does the organisation learn from those incidents? How do we share knowledge about what works and what does not? How do we improve our AI capabilities over time? Without these mechanisms, the organisation repeats mistakes and misses opportunities to build on successes.

The Governance Structures That Actually Work

Effective AI governance requires more than policies and committees. It requires practical mechanisms that help people make good decisions about AI without creating so much process that progress stalls.

Clear ownership starts at the top. Someone at the senior leadership level must own enterprise AI capability and be accountable for how it gets deployed and managed. This is typically the CIO or CTO, but might be a dedicated Chief AI Officer in organisations where AI is central to strategy. This person has the authority to approve high-risk AI applications, set standards, and allocate resources to AI governance.

An AI review board provides cross-functional oversight for significant AI deployments. This board includes technical expertise to evaluate AI approaches, business perspective to assess value and use cases, legal and compliance input to identify regulatory issues, and risk management to evaluate potential harms. The board reviews proposed AI applications, asks hard questions, and either approves them or requires changes before deployment.

Standards and guardrails define acceptable and unacceptable AI practices. These standards address data usage, model development, testing requirements, documentation expectations, and operational controls. They are specific enough to guide decisions but flexible enough to accommodate different types of AI applications. Teams building AI know what is expected and can self-assess compliance before formal review.

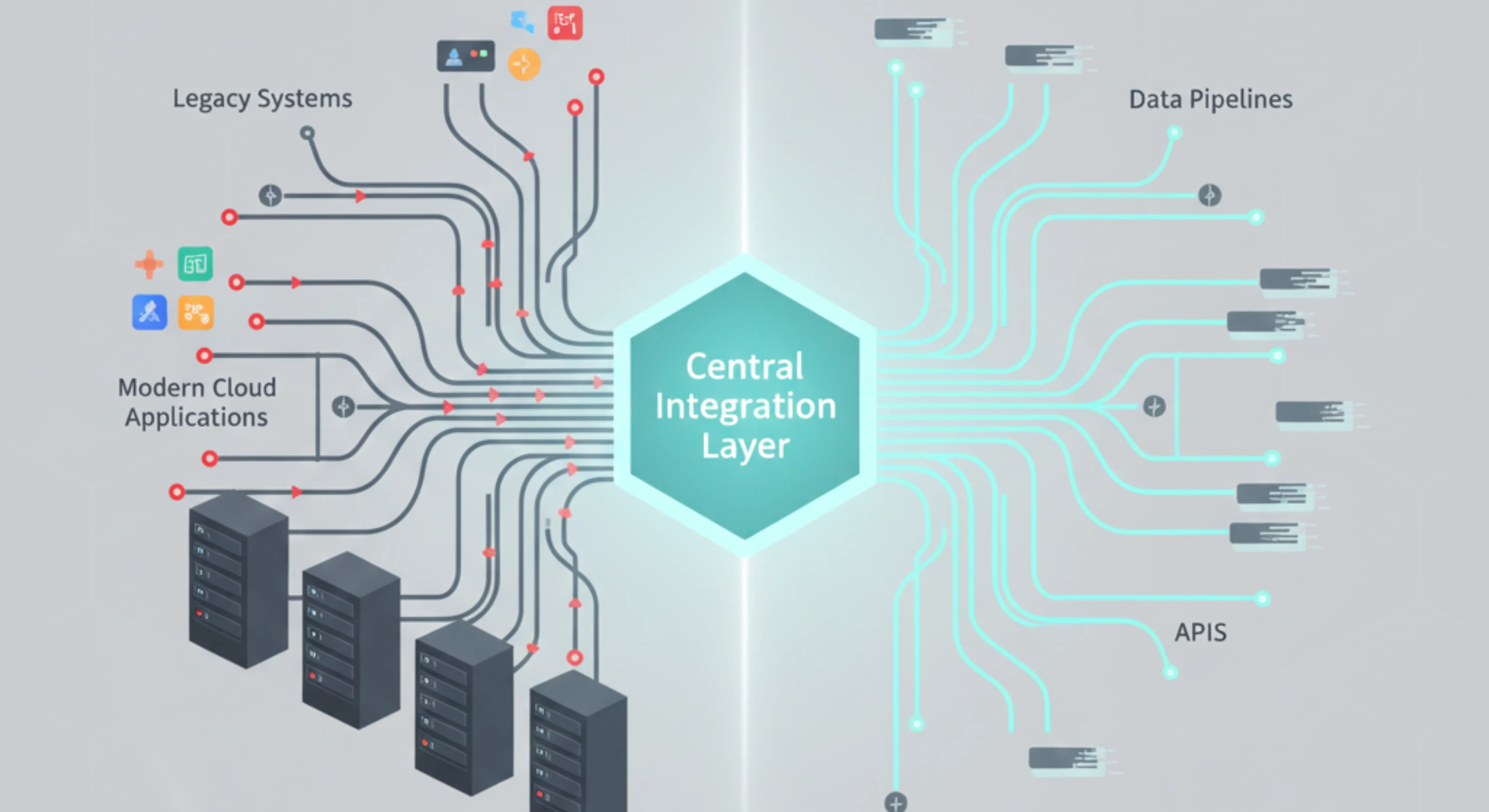

A central AI inventory tracks all deployed and planned AI systems across the enterprise. Each entry includes what the AI does, what decisions it makes, what data it uses, who owns it, and what risk level it represents. This inventory provides the visibility that leadership needs to understand AI deployment and identify gaps or duplications.

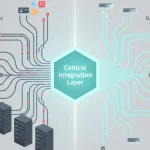
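The inventory entry described above can be sketched as a small record type with two helper checks. The field names, the `AISystemEntry` class, and both helper functions are hypothetical and exist only to illustrate the idea. A real inventory would normally live in a database or catalogue tool.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Tuple

@dataclass(frozen=True)
class AISystemEntry:
    name: str
    purpose: str                   # what the AI does
    decisions: str                 # decisions it makes or influences
    data_sources: Tuple[str, ...]  # data it uses
    owner: str                     # accountable team or person
    risk_level: str                # e.g. "low", "medium", "high"

def find_gaps(entries):
    """Return the names of systems that have no recorded owner."""
    return [e.name for e in entries if not e.owner.strip()]

def find_possible_duplicates(entries):
    """Group systems that share the same stated purpose (case-insensitive)."""
    by_purpose = defaultdict(list)
    for e in entries:
        by_purpose[e.purpose.strip().lower()].append(e.name)
    return {p: names for p, names in by_purpose.items() if len(names) > 1}
```

Matching on the purpose text is deliberately crude. It only flags candidates for a human to check, which is usually enough to start a conversation about redundant efforts.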

Model operations infrastructure provides the technical foundation for governing AI reliably. This includes version control for models, testing frameworks, deployment pipelines, monitoring capabilities, and incident response procedures. Without this infrastructure, governance policies cannot be enforced practically.

How Ozrit Structures AI Governance

Ozrit builds AI governance capabilities directly into operations platforms rather than treating governance as a separate overlay. The company understands that effective governance must be practical and enforceable, not just documented in policies people ignore.

The platform maintains comprehensive metadata about every AI model deployed within it. This includes what the model does, what data it uses, when it was trained, what testing validated it, who approved deployment, and what monitoring tracks its performance. This metadata provides the transparency governance requires without manual documentation that quickly becomes outdated.

Access controls enforce governance policies through technology. High-risk AI applications require appropriate approval before deployment. Model updates follow required testing and validation before reaching production. Changes to training data or decision thresholds trigger review when necessary. The system prevents governance violations rather than just detecting them after they occur.
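To make the idea of preventing violations concrete, here is a minimal sketch of a deployment gate that a pipeline could call before releasing a model. It does not describe how Ozrit implements this. The `DeploymentRequest` type, the approver role names, and the approval table are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical policy table: approvals required at each risk level.
REQUIRED_APPROVALS = {
    "low": set(),
    "medium": {"system_owner"},
    "high": {"system_owner", "ai_review_board"},
}

@dataclass
class DeploymentRequest:
    model_name: str
    risk_level: str
    approvals: set = field(default_factory=set)
    validation_passed: bool = False

def check_deployment(request):
    """Return the reasons a deployment is blocked; an empty list means it may proceed."""
    if request.risk_level not in REQUIRED_APPROVALS:
        return [f"unknown risk level: {request.risk_level}"]
    problems = []
    missing = REQUIRED_APPROVALS[request.risk_level] - request.approvals
    if missing:
        problems.append("missing approvals: " + ", ".join(sorted(missing)))
    if not request.validation_passed:
        problems.append("testing and validation not completed")
    return problems
```

Returning reasons rather than a bare yes or no gives the requesting team something actionable and gives the audit record something meaningful to store.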

Monitoring capabilities track AI performance across multiple dimensions. Technical monitoring shows model accuracy, prediction confidence, and processing performance. Fairness monitoring checks for biased outcomes across demographic groups. Business monitoring tracks whether AI is delivering expected operational improvements. Compliance monitoring validates that AI operates within required parameters. This comprehensive monitoring surfaces issues quickly rather than allowing problems to accumulate.
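As one example of what a fairness check can look like, the sketch below compares the rate of favourable outcomes across groups and reports the ratio between the lowest and highest rate. This is a single, simple metric among many, and the function names are illustrative. A ratio below 0.8 is often cited as a warning sign (the so-called four-fifths rule of thumb), but the right threshold depends on the context and the applicable regulation.

```python
from collections import Counter

def selection_rates(outcomes):
    """outcomes: iterable of (group, favourable) pairs. Returns {group: rate}."""
    totals, favourable = Counter(), Counter()
    for group, is_favourable in outcomes:
        totals[group] += 1
        if is_favourable:
            favourable[group] += 1
    return {g: favourable[g] / totals[g] for g in totals}

def disparate_impact_ratio(outcomes):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(outcomes)
    if not rates:
        raise ValueError("no outcomes to evaluate")
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome
    return min(rates.values()) / highest
```

A low ratio does not prove discrimination on its own. It indicates where a closer human review of the model and its data is warranted.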

Audit trails capture complete histories of AI decisions, including what data informed each decision, what the model recommended, whether a human was involved, and what outcome resulted. These trails support regulatory requirements for explainability and help the organisation learn from both successes and failures.
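The fields listed above map naturally onto an append-only decision record. The following is a sketch under stated assumptions: the `DecisionRecord` type, its field names, and the in-memory list standing in for durable storage are all hypothetical, and a production audit trail would also need tamper protection and retention controls.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional

@dataclass(frozen=True)
class DecisionRecord:
    model_name: str
    model_version: str
    inputs: dict                   # data that informed the decision
    recommendation: str            # what the model recommended
    human_reviewer: Optional[str]  # None if no human was involved
    final_outcome: str             # what actually happened
    timestamp: str

def record_decision(log, **fields):
    """Append one decision to the log as a JSON line and return the record."""
    record = DecisionRecord(
        timestamp=datetime.now(timezone.utc).isoformat(), **fields
    )
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record

def was_overridden(record):
    """True when the final outcome differs from the model's recommendation."""
    return record.final_outcome != record.recommendation
```

Storing the model version alongside each decision is what makes later questions answerable, such as which decisions were made by a model that was subsequently found to be faulty.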

The platform supports human oversight at appropriate points in AI-driven processes. For high-risk decisions, humans can review and override AI recommendations. For routine decisions, sampling mechanisms allow periodic human review to validate that AI is performing correctly. The level of human involvement adapts to risk levels and regulatory requirements.
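The routing rule in the paragraph above can be expressed in a few lines: always send high-risk decisions to a person, and sample a fraction of the rest. The function name, the risk labels, and the 5% default sample rate are assumptions for illustration only.

```python
import random

def needs_human_review(risk_level, sample_rate=0.05, rng=random):
    """Decide whether a single AI decision should be routed to a human.

    High-risk decisions are always reviewed. Other decisions are sampled
    at `sample_rate` so reviewers can spot-check routine behaviour.
    """
    if risk_level == "high":
        return True
    return rng.random() < sample_rate
```

Passing in a seeded `random.Random` instance as `rng` makes the sampling reproducible, which helps when the sampling itself needs to be audited.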

Implementation That Builds Governance In

Ozrit structures AI governance implementations to address both immediate needs and long-term capability development. The approach begins with a governance assessment, typically four to six weeks, that inventories current AI deployments, evaluates governance gaps, assesses risk exposure, and identifies quick wins alongside strategic improvements.

The implementation typically starts with establishing basic governance infrastructure like the AI inventory, risk classification framework, and approval processes. This creates immediate visibility and control over new AI deployments while the organisation works on more comprehensive capabilities.

Subsequent phases implement monitoring capabilities, develop detailed standards, build model operations infrastructure, and establish ongoing governance processes. Each phase delivers practical improvement in the organisation’s ability to deploy AI responsibly while managing risk appropriately.

A realistic timeline for meaningful AI governance capability is six to twelve months for focused programs that address immediate gaps and establish core infrastructure, or twelve to eighteen months for comprehensive governance spanning the full enterprise. These timelines assume reasonable organisational commitment to governance as a priority. Delays typically come from competing priorities or difficulty reaching consensus on policies rather than technical implementation challenges.

Ozrit assigns senior AI governance specialists to these programs because establishing effective governance requires both technical expertise and organisational judgment. These people have implemented AI governance before and know which approaches work in practice versus which look good on paper but fail in execution. They work with legal, compliance, risk management, and business stakeholders to develop governance that satisfies everyone’s legitimate concerns without becoming unworkable bureaucracy.

Balancing Control and Innovation

The perpetual tension in AI governance is between control and innovation. Too much governance and teams cannot move quickly enough to capture AI opportunities. Too little governance and the organisation accumulates risk that eventually causes serious problems.

The right balance depends on the organisation’s industry, risk tolerance, and regulatory environment. Financial services and healthcare typically need more stringent governance than retail or manufacturing. Organisations with low risk tolerance need tighter controls than those comfortable with experimentation.

Ozrit helps organisations find an appropriate balance by implementing tiered governance. Low-risk AI applications like operational optimisation or internal process automation get streamlined approval and lighter oversight. High-risk applications like customer-facing decisions or regulatory compliance get rigorous review and continuous monitoring. Medium-risk applications fall between these extremes. This approach focuses governance effort where it matters most, rather than treating all AI the same.
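A tiering scheme of this kind can be sketched as a small classification function plus a lookup of the oversight each tier receives. The criteria below are assumptions made for illustration, not Ozrit's actual rules. In particular, treating personal data as the marker of a medium-risk application is a hypothetical choice.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class UseCase:
    name: str
    customer_facing: bool
    affects_regulated_process: bool
    uses_personal_data: bool

# Hypothetical oversight attached to each tier.
OVERSIGHT = {
    "low": "streamlined approval, lighter oversight",
    "medium": "owner approval, periodic review",
    "high": "rigorous review, continuous monitoring",
}

def classify_tier(use_case):
    """Assign a governance tier; the strictest matching rule wins."""
    if use_case.customer_facing or use_case.affects_regulated_process:
        return "high"
    if use_case.uses_personal_data:
        return "medium"
    return "low"
```

Keeping the rules this explicit lets teams self-assess before formal review and lets the review board change a criterion in one place.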

The governance also evolves as the organisation matures in AI capability. Early in AI adoption, governance might be more restrictive because the organisation is still learning what works and building capability. As experience grows and infrastructure improves, governance can become more efficient while maintaining appropriate control.

Operating Governance Continuously

AI governance is not a one-time implementation. It requires ongoing attention as AI deployments grow, regulations evolve, and the organisation learns from experience. The governance framework must adapt to remain relevant and effective.

Regular governance reviews assess whether current policies and processes are working. Are approval processes causing unnecessary delays? Is monitoring catching issues effectively? Are standards clear and being followed? These reviews identify improvements based on actual experience rather than theoretical considerations.

Incident reviews analyse what went wrong when AI systems fail or cause problems. These reviews focus on learning rather than blame, identifying what governance mechanisms should have prevented the issue and what improvements would reduce similar risks in the future. This learning feeds back into standards, monitoring, and approval processes.

The 24/7 support for Ozrit platforms includes governance-aware engineers who understand compliance requirements and can respond to issues without creating governance violations. When problems occur with AI systems, they address both the technical issue and any governance implications, ensuring that fixes meet operational and compliance needs.

The Board’s Role in AI Governance

Boards increasingly face questions about AI governance from regulators, investors, and stakeholders. Directors need enough understanding to provide meaningful oversight without trying to manage technical details.

The board should expect regular updates on AI deployment across the enterprise, significant risks that AI creates, how governance is managing those risks, and any incidents that occurred and what was learned. These updates should be clear and factual, focusing on business implications rather than technical complexity.

Directors should ask informed questions. How confident are we that our AI systems are not creating bias? What happens when AI makes a wrong decision? How quickly would we know if an AI system was malfunctioning? Are we complying with relevant AI regulations? The quality of answers reveals whether governance is actually working.

The board’s role is to ensure that leadership takes AI governance seriously, allocates appropriate resources, and addresses issues when they arise. This oversight is becoming essential as AI becomes more central to enterprise operations and as regulators increase scrutiny of AI deployment.

Why This Matters Now

AI adoption is accelerating across enterprises. Without effective governance, that growth creates accumulating risk that will eventually manifest as regulatory penalties, reputational damage, operational failures, or discriminatory outcomes that harm people. The organisations that implement strong AI governance now position themselves to scale AI adoption confidently. Those that delay face a choice between constraining AI use to manage risk and accepting exposure that will eventually cause serious problems.