The introduction of AI into enterprise systems is no longer a question of “if” but “when” and “how.” For C-level executives overseeing mid-to-large enterprises, particularly those operating in India’s complex regulatory and operational environment, this shift brings a new set of responsibilities that go far beyond technology selection.

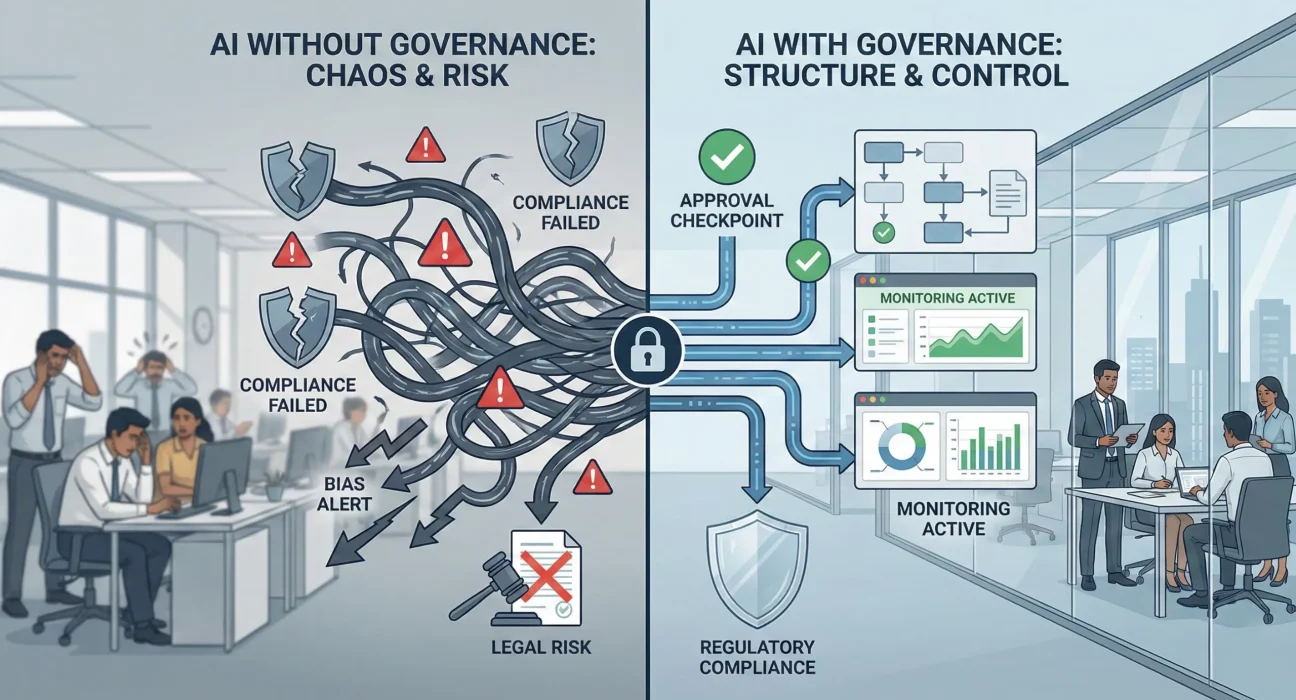

AI is not just another software module. It makes decisions, influences outcomes, and operates with a degree of autonomy that traditional systems do not possess. When deployed at enterprise scale, AI systems touch customer data, employee information, financial transactions, and strategic operations. The governance and ethical frameworks around these systems often determine whether they become strategic assets or costly liabilities.

The challenge is that most organisations are still treating AI deployment as a technology problem when it is fundamentally a governance and execution problem. The hard part is not building the model. The hard part is ensuring it behaves responsibly, complies with regulations, integrates with legacy systems, and continues to deliver value over time without creating new risks.

Why AI Governance Fails in Most Enterprise Deployments

Large enterprises have spent decades building governance frameworks for traditional IT systems. These frameworks assume predictability. They assume you can test every scenario, document every decision path, and maintain full control over system behaviour.

AI breaks these assumptions.

A machine learning model trained on historical data can behave differently, and often worse, when real-world conditions change. A recommendation engine might inadvertently discriminate. A fraud detection system might flag legitimate transactions from new customer segments. These are not bugs in the traditional sense. They are emergent properties of how AI systems learn from data and adapt.

Most enterprise AI failures do not happen because the technology was bad. They happen because:

Ownership was unclear. IT built the model, business defined the requirements, legal reviewed compliance, and risk management signed off on controls. But no single person was accountable for what the AI actually did in production.

Governance was bolted on later. Organisations piloted AI in isolated environments, saw promising results, and then rushed to scale without building the foundational governance structures needed for enterprise deployment.

Ethics became a checkbox exercise. Companies created AI ethics committees, published principles, and conducted bias audits. But these activities rarely translated into operational controls that product teams could actually implement.

Monitoring was insufficient. Traditional application monitoring tracks uptime and performance. AI systems need monitoring for drift, fairness, explainability, and alignment with business intent. Most enterprises lack the tooling and processes to do this effectively.

Vendors overpromised and underdelivered. Many AI vendors sell outcomes without explaining the governance burden that comes with those outcomes. Enterprises sign contracts expecting plug-and-play solutions and discover they need dedicated teams to manage model lifecycle, data quality, and compliance.
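Of the failure points above, the monitoring gap is the most concrete to close. Drift is often measured with the Population Stability Index (PSI), which compares how a feature or score is distributed in production against the training data. The Python sketch below is a minimal version under stated assumptions: it uses equal-width bins taken from the training range, although many teams use quantile bins instead. The function name is illustrative, and the 0.1 and 0.25 cut-offs quoted in its docstring are a widely used rule of thumb, not a standard.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index of `actual` (production) against `expected` (training).

    Rule of thumb often quoted: below 0.1 stable, 0.1 to 0.25 moderate shift,
    above 0.25 significant shift worth investigating.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for a constant feature

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the training range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training_scores = [i / 100 for i in range(1000)]
production_scores = [s + 3 for s in training_scores]  # simulated shift in production
print(psi(training_scores, training_scores))           # 0.0: identical distributions
print(psi(training_scores, production_scores) > 0.25)  # True: flag for review
```

A single number per feature is easy to put on a dashboard and attach an alert to. It says nothing about why the data moved, so a breach should open an investigation rather than trigger an automatic retrain.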

The gap between AI pilots and AI in production is enormous. Pilots run in controlled environments with clean data and narrow use cases. Production systems run in messy environments with incomplete data, integration dependencies, and constantly changing requirements. Governance is what bridges that gap.

The Real Governance Challenges Enterprises Face

Enterprise AI governance is not an abstract concept. It is a series of very practical decisions that must be made before, during, and after deployment.

Data Governance and Quality

AI systems are only as good as the data they are trained on. In large enterprises, data exists across multiple systems, geographies, and formats. Customer data might be stored in CRM systems, transaction systems, legacy databases, and third-party platforms. Employee data might span HR systems, access logs, and productivity tools.

Before any AI model can be deployed, someone needs to answer these questions: Where does the training data come from? Who owns it? How often is it updated? What happens when data quality degrades? How do you ensure consistency across regions and business units?

Most enterprises discover these questions too late. They build models on sample datasets that do not reflect production complexity. When the model goes live, performance drops because real-world data does not match training data. This is not a technology problem. It is a governance problem.
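Some of these questions can be turned into automated checks that run before every training or scoring job. Below is a minimal Python sketch. It assumes that records arrive as dictionaries and that freshness and missing-value limits have been agreed with the data owner. The field names, the seven-day window, and the 2% limit used here are illustrative, not recommendations.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


def data_quality_issues(
    rows: List[Dict[str, object]],
    required: List[str],
    last_updated: datetime,
    max_age: timedelta,
    max_missing_share: float = 0.02,
    now: Optional[datetime] = None,
) -> List[str]:
    """Return human-readable issues; an empty list means the batch passed."""
    now = now or datetime.now(timezone.utc)
    issues = []
    age = now - last_updated
    if age > max_age:
        issues.append(f"stale source: last updated {age.days} days ago")
    for column in required:
        missing = sum(1 for row in rows if row.get(column) in (None, ""))
        share = missing / len(rows) if rows else 1.0  # an empty batch counts as fully missing
        if share > max_missing_share:
            issues.append(f"{column}: {share:.1%} missing")
    return issues


batch = [{"customer_id": "C1", "region": "North"}, {"customer_id": "C2", "region": None}]
print(data_quality_issues(
    batch, ["customer_id", "region"],
    last_updated=datetime(2024, 1, 1, tzinfo=timezone.utc),
    max_age=timedelta(days=7),
    now=datetime(2024, 1, 10, tzinfo=timezone.utc),
))
# ['stale source: last updated 9 days ago', 'region: 50.0% missing']
```

The thresholds are governance decisions, not engineering defaults. Writing them down as parameters makes it clear who set them and when they last changed.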

Compliance and Regulatory Requirements

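India’s Digital Personal Data Protection Act, 2023 (DPDP Act) has fundamentally changed how enterprises must handle customer and employee data. AI systems that process personal data must comply with its consent and purpose-limitation requirements and with any restrictions the government notifies on transferring data outside India. They must also meet its obligation to ensure the completeness, accuracy, and consistency of personal data used to make decisions that affect individuals.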

Global enterprises operating in India face an even more complex landscape. They must comply with DPDP Act requirements while also meeting GDPR standards in Europe, CCPA requirements in California, and industry-specific regulations across different markets.

The challenge is that AI models often need access to personal data to function effectively. Recommendation systems need purchase history. Credit scoring models need financial data. HR analytics need employee information. Balancing AI performance with privacy compliance requires careful architectural decisions, not just legal reviews.

Enterprises that treat compliance as a one-time approval process inevitably run into trouble. Regulations evolve. AI models retrain. Business requirements change. Governance frameworks need to account for this continuous evolution.

Bias, Fairness, and Explainability

AI bias is not just an ethical concern. It is a business risk and a legal liability. An AI system that systematically disadvantages certain customer segments can lead to regulatory action, reputational damage, and revenue loss.

The problem is that bias is often invisible until it causes harm. A hiring algorithm might seem neutral but systematically filter out qualified candidates from certain backgrounds. A loan approval system might appear data-driven but replicate historical patterns of discrimination.

Detecting and mitigating bias requires ongoing monitoring, not just initial testing. It requires diverse teams who can spot problems that might not be obvious to algorithm designers. It requires business leaders who understand that fairness is not just about legal compliance but about long-term customer trust.
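One way to make ongoing bias monitoring concrete is to compare outcome rates across groups on every batch of production decisions. The sketch below computes each group's approval rate relative to the best-treated group and flags ratios under 0.8. That threshold is borrowed from the "four-fifths" rule of thumb in US employment guidance. It is a screening signal, not a legal test under Indian law, and the group labels and counts in the example are invented.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of favourable outcomes (e.g. approvals) for each group."""
    totals: Dict[str, int] = defaultdict(int)
    favourable: Dict[str, int] = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        favourable[group] += int(approved)
    return {group: favourable[group] / totals[group] for group in totals}


def disparate_impact_flags(
    decisions: Iterable[Tuple[str, bool]], threshold: float = 0.8
) -> Dict[str, float]:
    """Groups whose rate is below `threshold` times the highest group's rate."""
    rates = selection_rates(decisions)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {}  # nobody approved: the ratio is undefined, investigate separately
    return {g: r / best for g, r in rates.items() if r / best < threshold}


batch = ([("group_a", True)] * 60 + [("group_a", False)] * 40
         + [("group_b", True)] * 40 + [("group_b", False)] * 60)
print(disparate_impact_flags(batch))  # group_b flagged with a ratio of about 0.67
```

Running this on every scoring batch, rather than once before launch, is what turns a bias audit into monitoring.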

Explainability is equally important. When an AI system makes a decision that affects customers or employees, someone needs to be able to explain why. Regulators expect it. Customers demand it. Internal stakeholders need it to trust the system.
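For interpretable models, explaining an individual decision can be straightforward. In a linear scorecard, each feature's contribution is its weight multiplied by how far the applicant's value sits from a reference applicant. The most negative contributions then become the stated reasons for a rejection. The Python sketch below assumes such a linear score; the feature names, weights, and reference values are hypothetical. Complex models need dedicated attribution methods and give less direct answers.

```python
from typing import Dict, List


def reason_codes(
    weights: Dict[str, float],
    applicant: Dict[str, float],
    reference: Dict[str, float],
    top_n: int = 2,
) -> List[str]:
    """Features that pulled a linear score furthest below the reference applicant."""
    contributions = {
        name: weight * (applicant[name] - reference[name])
        for name, weight in weights.items()
    }
    negatives = sorted(
        (item for item in contributions.items() if item[1] < 0),
        key=lambda item: item[1],  # most negative first
    )
    return [name for name, _ in negatives[:top_n]]


weights = {"income_lakh": 0.5, "missed_payments": -2.0, "credit_history_years": 0.3}
reference = {"income_lakh": 10, "missed_payments": 0, "credit_history_years": 8}
applicant = {"income_lakh": 6, "missed_payments": 3, "credit_history_years": 2}
print(reason_codes(weights, applicant, reference))
# ['missed_payments', 'income_lakh']
```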

But explainability comes with trade-offs. The most accurate AI models are often the least interpretable. Enterprises must decide where they sit on that spectrum for each use case. A fraud detection system might prioritise accuracy over explainability. A loan rejection system might need to prioritise explainability over marginal accuracy gains.

These are not technical decisions. They are governance decisions that require input from legal, risk, business, and technology teams.

Vendor and Third-Party Risk

Most enterprises do not build AI systems entirely in-house. They rely on cloud platforms, pre-trained models, third-party APIs, and consulting partners. This creates a web of dependencies that must be governed.

What happens when a third-party AI service changes its model and suddenly your application behaves differently? What happens when a vendor’s data practices do not align with your compliance requirements? What happens when a critical AI component becomes unavailable or is discontinued?

Vendor management for AI is different from traditional software vendor management. You are not just licensing code. You are integrating systems that learn, adapt, and make decisions. Contracts need to address model performance guarantees, data usage rights, audit capabilities, and transition plans.

Enterprises that have built mature vendor governance frameworks for traditional IT often find those frameworks inadequate for AI vendors. The questions are different. The risks are different. The contractual controls need to be different.

What It Actually Takes to Get AI Governance Right

Building effective AI governance is not about creating more policies or hiring more compliance staff. It is about embedding governance into the delivery process from day one.

Clear Ownership and Accountability

Every AI system in production needs a named owner who is accountable for its behaviour. Not the technology stack. Not the vendor relationship. The actual business outcomes and risks created by the AI system.

This owner needs to have authority across IT, legal, risk, and business functions. They need budget, decision-making power, and direct access to senior leadership. Without this level of ownership, AI governance becomes a coordination exercise that nobody truly controls.

In practice, this means creating new roles or expanding existing roles. Some enterprises appoint AI product owners who sit between technology and business. Others create cross-functional AI governance boards with clear escalation paths. The structure matters less than the accountability.

Governance as Part of Delivery, Not Separate from It

Traditional governance often operates as a gate. Projects submit documentation, governance reviews it, approval is granted or denied. This model breaks down for AI because AI systems evolve continuously. A model that was approved last quarter might behave differently this quarter because it retrained on new data.

Effective AI governance is embedded in the delivery lifecycle. It includes:

Design-time controls: Architecture reviews that assess data sources, model selection, and integration patterns before development begins.

Development-time controls: Code reviews, bias testing, and security assessments as part of the standard development process.

Deployment-time controls: Production readiness reviews that verify monitoring, rollback capabilities, and incident response procedures.

Runtime controls: Continuous monitoring of model performance, fairness metrics, and compliance indicators with automated alerts and manual review triggers.

This requires governance teams to work alongside delivery teams, not above them. It requires tooling that makes governance visible and measurable. It requires processes that can keep pace with agile development without becoming bottlenecks.

Partners who understand enterprise delivery realities can be instrumental here. Ozrit, for instance, brings experience in integrating governance into execution workflows rather than treating it as a separate compliance function. The focus is on building governance capabilities within delivery teams, not creating parallel governance bureaucracies.

Transparency and Communication

AI systems often fail not because of technical problems but because stakeholders do not understand what they do, how they work, or when they should escalate issues.

Business leaders need to understand the limitations of AI systems they are asked to approve. They need to know that a 95% accuracy rate means roughly one decision in twenty will be wrong, and what the consequences of those errors might be.
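Turning an accuracy figure into expected errors and their cost is simple arithmetic, and it makes the approval conversation concrete. The sketch below assumes a hypothetical fraud-screening model. The monthly volume, the split between false alarms and missed cases, and the per-error costs are all invented for illustration.

```python
from typing import Dict


def monthly_error_exposure(
    volume: int,
    accuracy: float,
    false_positive_share: float,
    cost_false_positive: float,
    cost_false_negative: float,
) -> Dict[str, int]:
    """Expected wrong decisions per month and their cost.

    Accuracy is averaged over all decisions, so the split between error types
    has to come from the confusion matrix on recent data, not from accuracy.
    """
    errors = volume * (1 - accuracy)
    false_positives = errors * false_positive_share  # e.g. genuine customers blocked
    false_negatives = errors - false_positives       # e.g. fraud that slips through
    cost = false_positives * cost_false_positive + false_negatives * cost_false_negative
    return {"errors": round(errors), "false_positives": round(false_positives),
            "false_negatives": round(false_negatives), "cost": round(cost)}


# 200,000 decisions at 95% accuracy; 30% of errors are false alarms costing
# Rs 200 each in manual review, 70% are missed fraud costing Rs 5,000 each.
print(monthly_error_exposure(200_000, 0.95, 0.30, 200, 5_000))
# {'errors': 10000, 'false_positives': 3000, 'false_negatives': 7000, 'cost': 35600000}
```

Under these assumptions the "5% error" amounts to roughly ₹3.56 crore a month, almost all of it from missed fraud. That is why approvers should ask which errors make up the 5%, not only how many there are.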

Technology teams need to understand the business context in which AI operates. They need to know which customer segments are most sensitive to algorithmic decisions and which regulatory requirements cannot be compromised.

Legal and compliance teams need to understand how AI systems actually function, not just what vendors claim they do. They need access to model documentation, training data sources, and performance metrics.

This level of transparency requires intentional communication practices. Regular business reviews that cover AI performance and risks. Documentation that is actually readable by non-technical stakeholders. Incident response processes that include both technical and business leadership.

Continuous Monitoring and Adaptation

AI governance is not a set-it-and-forget-it process. Models drift. Data changes. Business requirements evolve. Regulations get updated.

Enterprises need monitoring systems that track not just technical metrics but business outcomes. Is the AI system still delivering the value it was designed to deliver? Are there new patterns of errors or bias? Are customers or employees raising concerns?

This monitoring needs to trigger action, not just reports. When a model starts drifting, who decides whether to retrain it or take it offline? When a fairness metric crosses a threshold, what is the escalation path? When a regulatory requirement changes, how quickly can the AI system be updated?
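One way to make monitoring trigger action is to write the escalation policy down as data, so every threshold breach maps to a named action. Below is a minimal sketch, assuming monitoring jobs emit one value per metric per model. The metric names, thresholds, and action labels are hypothetical; in practice the accountable owner would set them with input from risk and legal.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class Signal:
    model_id: str
    metric: str
    value: float


# metric -> (warning threshold, critical threshold, True if higher values are worse)
ESCALATION_POLICY: Dict[str, Tuple[float, float, bool]] = {
    "psi": (0.10, 0.25, True),                      # distribution drift
    "disparate_impact_ratio": (0.90, 0.80, False),  # fairness across groups
}


def breached(value: float, threshold: float, higher_is_worse: bool) -> bool:
    return value > threshold if higher_is_worse else value < threshold


def route(signal: Signal) -> str:
    """Map one monitoring reading to an action under the policy above."""
    warning, critical, higher_is_worse = ESCALATION_POLICY[signal.metric]
    if breached(signal.value, critical, higher_is_worse):
        # The named owner decides whether to retrain, roll back, or take the model offline.
        return "page owner; pause automated decisions"
    if breached(signal.value, warning, higher_is_worse):
        return "open review ticket for next governance meeting"
    return "no action"


for reading in [Signal("credit-scoring-v3", "psi", 0.31),
                Signal("credit-scoring-v3", "disparate_impact_ratio", 0.86)]:
    print(reading.model_id, reading.metric, "->", route(reading))
```

An unrecognised metric raises a `KeyError` rather than defaulting to "no action". As a result, a new signal cannot be monitored silently until someone has agreed what a breach should trigger.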

The enterprises that excel at AI governance treat it as an operational discipline, not a project phase. They invest in tooling, training, and processes that make governance sustainable over time.

The Role of Leadership in AI Governance

Technology teams can build governance frameworks, but only leadership can make them effective.

CEOs and business leaders set the tone. When leadership treats AI governance as a compliance checkbox, the entire organisation follows suit. When leadership treats it as a strategic capability that enables responsible innovation, teams invest accordingly.

CIOs and CTOs own the technical architecture, but they also own the organisational structures that support AI delivery. Do teams have the skills they need? Are incentives aligned with long-term value rather than short-term deployment speed? Are there career paths for people who specialise in AI governance and ethics?

CFOs control budgets and should be asking hard questions about AI ROI. Not just the cost of building models but the cost of governing them, monitoring them, and maintaining them over time. AI systems that lack proper governance often become more expensive to maintain than traditional systems because they require constant intervention and remediation.

Chief Risk Officers and General Counsel need to be involved early, not just at approval gates. They need to help shape AI strategy, not just review AI projects. Their input on risk tolerance, compliance requirements, and ethical boundaries should inform design decisions, not just deployment decisions.

Chief Data Officers, where they exist, play a critical role in AI governance. They sit at the intersection of data quality, data privacy, and data-driven decision-making. Their ability to build enterprise-wide data governance capabilities directly impacts AI success.

The most successful AI deployments have visible executive sponsorship that goes beyond budget approval. Executives who ask questions. Executives who hold teams accountable for governance outcomes. Executives who are willing to slow down or pause deployments when governance gaps are identified.

Choosing Partners Who Understand Execution, Not Just Technology

Many enterprises assume that AI expertise means hiring vendors with the best algorithms or the most sophisticated platforms. But enterprise AI success depends more on execution maturity than technical sophistication.

The right partners understand that AI deployment is a change management challenge as much as a technology challenge. They know how to navigate legacy system integration, stakeholder alignment, and regulatory complexity. They have delivered enough enterprise programs to anticipate the problems that only become visible in production.

When evaluating AI partners, executives should ask different questions than they ask when buying traditional software:

How do you handle governance and compliance in production, not just in pilots?

What happens when model performance degrades? What is your process for retraining, testing, and redeployment?

How do you manage bias and fairness monitoring over time?

What does your incident response process look like when an AI system behaves unexpectedly?

How do you document and explain AI decisions to non-technical stakeholders?

Partners who have real answers to these questions, backed by actual enterprise delivery experience, are worth far more than partners who promise the latest technology without the operational maturity to sustain it.

Ozrit’s approach to enterprise AI reflects this execution-first mindset. The focus is not on selling AI capabilities but on building delivery frameworks that ensure AI systems can be governed, monitored, and sustained over years, not just months. This means investing in documentation, training, knowledge transfer, and operational processes that outlast any individual project.

What Good AI Governance Looks Like in Practice

Governance frameworks can feel abstract until you see them in action. Here is what effective AI governance looks like in a real enterprise environment:

Before any AI system goes into production, there is a clear document that answers: What business problem does this solve? What data does it use and where does that data come from? What are the known limitations and risks? Who is accountable for its behaviour? What are the rollback procedures if something goes wrong?

During development, there are regular reviews where business, legal, and technology teams discuss not just progress but risks, dependencies, and assumptions. Bias testing is not a final gate but an ongoing activity. Model documentation is updated whenever the model or its code changes.

At deployment, there is a production readiness review that covers not just technical infrastructure but operational processes. Who monitors the model? What metrics matter? What triggers an alert? What are the escalation paths? How is performance communicated to business stakeholders?

After deployment, there is continuous monitoring that tracks both technical performance and business outcomes. When issues arise, there are clear processes for investigation, remediation, and communication. When models need retraining, there are approval workflows that verify data quality and test results before redeployment.

And critically, there is a regular governance review where senior leadership assesses the entire AI portfolio. Which systems are delivering value? Which systems have accumulated technical debt? Which systems face new regulatory risks? Where should investment increase and where should it decrease?

This is not theoretical. This is what mature enterprises actually do when they take AI governance seriously.
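Two of these practices translate naturally into code. The pre-production document becomes a record whose questions must all be answered before release. The retraining approval becomes a gate that checks data quality and test results before redeployment. Below is a minimal Python sketch under stated assumptions: the record fields simply mirror the questions listed above, every metric is treated as higher-is-better, the 0.01 tolerance is illustrative, and all names are hypothetical.

```python
from dataclasses import dataclass, fields
from typing import Dict, List, Tuple


@dataclass
class ReleaseRecord:
    business_problem: str
    data_sources: str       # what data is used and where it comes from
    known_limitations: str  # known limitations and risks
    accountable_owner: str  # a named person, not a team
    rollback_procedure: str


def unanswered(record: ReleaseRecord) -> List[str]:
    """Questions left blank; release is blocked until this list is empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name).strip()]


def approve_redeployment(
    data_checks: Dict[str, bool],
    production_metrics: Dict[str, float],
    candidate_metrics: Dict[str, float],
    tolerance: float = 0.01,
) -> Tuple[bool, List[str]]:
    """Approve a retrained model only if every data check passed and no
    metric fell more than `tolerance` below the version in production."""
    reasons = [f"data check failed: {name}" for name, ok in data_checks.items() if not ok]
    for metric, current in production_metrics.items():
        if metric not in candidate_metrics:
            reasons.append(f"metric not reported: {metric}")
        elif candidate_metrics[metric] < current - tolerance:
            new = candidate_metrics[metric]
            reasons.append(f"regression in {metric}: {current:.3f} -> {new:.3f}")
    return (not reasons, reasons)


record = ReleaseRecord(
    business_problem="Prioritise suspicious card transactions for review",
    data_sources="Card transaction system; chargeback history",
    known_limitations="Weaker on merchant categories with little history",
    accountable_owner="",
    rollback_procedure="Revert to the rules engine via a feature flag",
)
print(unanswered(record))  # ['accountable_owner']

approved, reasons = approve_redeployment(
    data_checks={"schema_matches": True, "freshness": True},
    production_metrics={"auc": 0.82, "disparate_impact_ratio": 0.91},
    candidate_metrics={"auc": 0.83, "disparate_impact_ratio": 0.84},
)
print(approved, reasons)  # False: the fairness ratio regressed even though AUC improved
```

The fairness ratio sits in the same gate as accuracy. A retrain that improves one at the expense of the other is therefore stopped for a human decision rather than shipped automatically.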

Moving Forward with Confidence

AI will continue to reshape enterprise operations. The organisations that succeed will not be those with the most advanced algorithms. They will be those with the governance maturity to deploy AI responsibly, sustainably, and at scale.

This requires investment, not just in technology but in people, processes, and organisational capabilities. It requires leadership that understands the difference between innovation theatre and operational excellence. It requires partners who have actually delivered complex enterprise programs and understand what it takes to make them work.

The good news is that enterprises do not need to figure this out alone. The patterns of successful AI governance are becoming clearer. The tooling is improving. The regulatory landscape, while complex, is also becoming more defined.

What matters now is execution. Building governance frameworks that actually work in production. Creating accountability structures that survive organisational change. Developing monitoring capabilities that catch problems before they become crises. Training teams to think about AI as a governed capability, not just a technology feature.

For C-level executives, the question is not whether to invest in AI governance but how quickly you can build the maturity to do it well. Because in the long run, the enterprises that govern AI effectively will outcompete those that do not. Not because governance is glamorous, but because it is what makes AI sustainable, compliant, and valuable over time.

The competitive advantage belongs to those who execute, not just those who experiment.